Problem framing

Traditional ERP systems often lack the flexibility and real-time intelligence needed for modern enterprise operations, especially when module relationships become dense and difficult to optimize.

This portal packages the published research artifact for "AI and Cloud Computing in Business Systems: A Hybrid Model for Enhancing Enterprise Resource Planning" as a cleaner public-facing repository. It includes publication-backed visuals, an architecture walkthrough, and a results dashboard that distinguishes exact values from reconstructed ones.

The paper frames ERP modernization as a combined AI and cloud problem: the model must understand module-level dependencies, while the deployment model must remain scalable and interpretable in a cloud-hosted business environment.

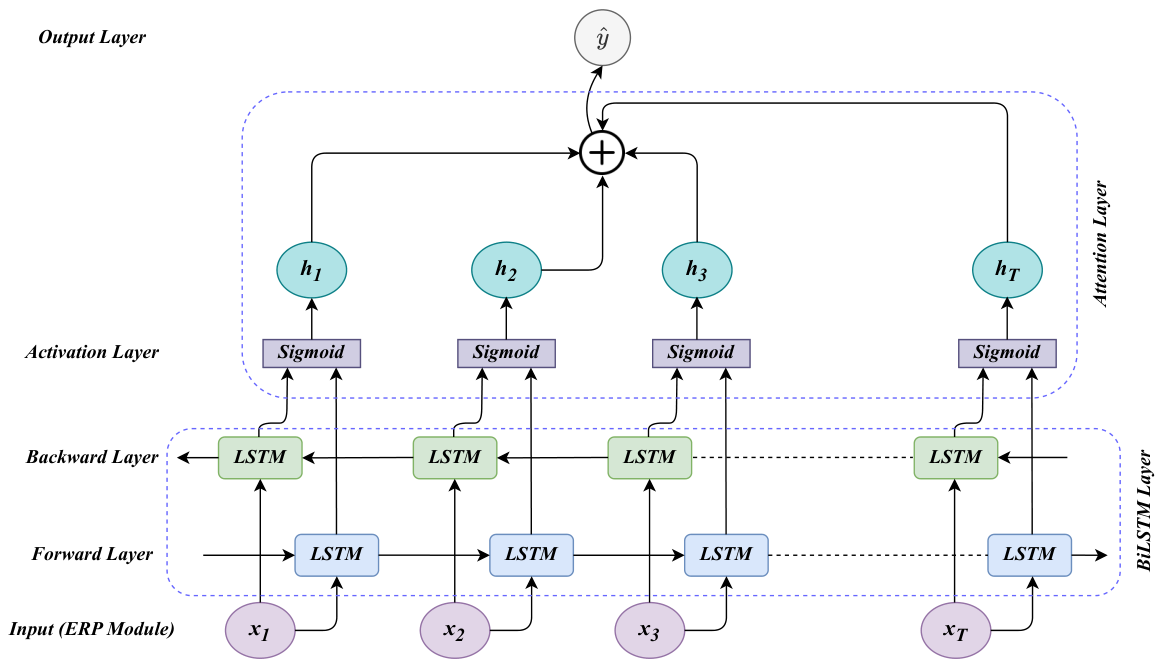

The proposed BiLSTM-Attention architecture captures sequential ERP dependencies from both directions and adds attention weights to expose which modules matter most to the prediction.

The resulting predictions support risk management, intelligent load balancing, structural analysis, and cloud-aware resource decisions in ERP service ecosystems.

The proposed model beats the best baseline by 5.9 percentage points in accuracy, and the most salient component in the attention analysis is Module_5 (attention weight 0.17).

The site uses exact values wherever the paper provides them. Elements that had to be reconstructed because raw logs are absent from the repository are clearly labeled as such.

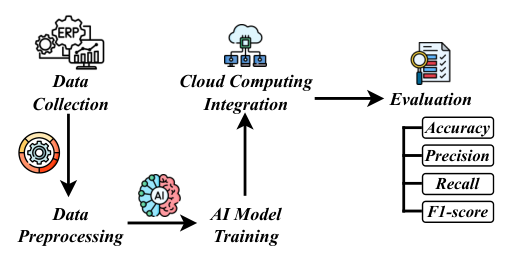

The methodology starts with ERP module dependency metadata, applies sequence-oriented preprocessing, learns contextual interactions with BiLSTM-Attention, and then maps the output to a cloud ERP integration pathway.

The paper references the ERP Module-Table Dependency Dataset and models module codes, table counts, dependency counts, and complexity indicators.

Missing values are handled, categorical data is encoded, numerical features are normalized, and linked modules are converted into ordered sequences.
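The preprocessing steps described here could be sketched as follows. This is a minimal illustration in plain Python, not the paper's pipeline: the record fields (`module`, `tables`, `deps`, `linked`), the sample values, median imputation, min-max scaling, and zero-padding are all assumptions chosen for the example.

```python
from statistics import median

# Hypothetical records; field names and values are illustrative only.
records = [
    {"module": "Module_1", "tables": 12, "deps": 30,
     "linked": ["Module_1", "Module_2", "Module_3"]},
    {"module": "Module_2", "tables": None, "deps": 18,
     "linked": ["Module_2", "Module_1"]},
    {"module": "Module_3", "tables": 20, "deps": 42,
     "linked": ["Module_3", "Module_4", "Module_1"]},
]

def impute_median(rows, key):
    """Replace missing values with the median of the observed ones."""
    observed = [r[key] for r in rows if r[key] is not None]
    fill = median(observed)
    return [r[key] if r[key] is not None else fill for r in rows]

def min_max(values):
    """Scale values to [0, 1]; a constant column maps to 0.0."""
    lo, hi = min(values), max(values)
    span = hi - lo
    return [(v - lo) / span if span else 0.0 for v in values]

def build_vocab(rows):
    """Assign each module code an integer id (0 is reserved for padding)."""
    codes = sorted({c for r in rows for c in r["linked"]})
    return {code: i + 1 for i, code in enumerate(codes)}

def encode_sequences(rows, vocab, max_len):
    """Map linked-module lists to fixed-length id sequences, right-padded with 0."""
    out = []
    for r in rows:
        ids = [vocab[c] for c in r["linked"]][:max_len]
        out.append(ids + [0] * (max_len - len(ids)))
    return out

tables = min_max(impute_median(records, "tables"))
deps = min_max(impute_median(records, "deps"))
vocab = build_vocab(records)
sequences = encode_sequences(records, vocab, max_len=3)
```

The numeric columns and the id sequences would then be fed to the sequence model. The paper may use a different imputation or encoding scheme.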

BiLSTM captures forward and backward sequence context, while attention weights highlight the most influential ERP modules.
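To make the attention step concrete, the sketch below pools a sequence of hidden states into one context vector using softmax weights. It assumes dot-product scoring against a single query vector. The hidden states are hand-written stand-ins for concatenated forward and backward BiLSTM outputs, and the paper's exact scoring function is not reproduced here.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_pool(hidden_states, query):
    """Return (weights, context) for a sequence of hidden-state vectors."""
    # One score per time step: dot product of the hidden state with the query.
    scores = [sum(h_j * q_j for h_j, q_j in zip(h, query)) for h in hidden_states]
    weights = softmax(scores)
    dim = len(hidden_states[0])
    # Context vector: attention-weighted sum of the hidden states.
    context = [sum(w * h[d] for w, h in zip(weights, hidden_states))
               for d in range(dim)]
    return weights, context

# Hypothetical hidden states for a three-module sequence (assumed values).
hidden = [[0.1, 0.3], [0.9, 0.4], [0.2, 0.1]]
query = [1.0, 0.5]
weights, context = attention_pool(hidden, query)
```

The weights sum to 1, so they can be read as per-module shares of influence. The second module has the largest score here and therefore the largest weight.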

These views combine exact reported metrics with charted comparisons and one clearly identified reconstructed training series derived from the published figures.

All bar values come directly from the model comparison table reported in the paper.

Exact attention shares are taken from the module interpretation table. They sum to 1.00.

The proposed model improves across all four reported evaluation dimensions, not just a single metric.

This line chart is reconstructed from the published training figures because raw epoch logs were not included in the repository.

The README and website both surface the required visualization set. Every chart here is grounded in the paper's reported tables, attention scores, or clearly marked reconstructed training traces.

Distribution of the 10 exact attention scores reported for ERP modules.

Share view of the same exact module attention weights, highlighting Module_5 as the dominant component.

Small-sample violin view of reported metric distributions across traditional ML, sequence DL, and proposed model families.

Matrix rendering of the exact model comparison table from the paper.

Scatter-matrix view of the four evaluation metrics across the five published models.

Precision versus recall relationship with marginal histograms, built from exact table values.

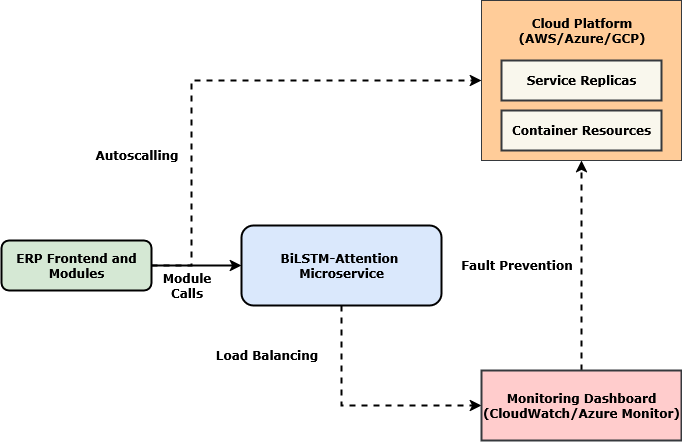

The paper does not claim a production deployment, but it formalizes how attention-aware dependency prediction can support cloud ERP operations in realistic service environments.

High-dependency modules can be identified earlier and assigned more compute or routing priority in cloud-hosted ERP systems where module demand varies sharply.

Modules with consistently high attention can be monitored as possible dependency hubs or failure multipliers inside complex ERP ecosystems.

The paper suggests that dependency-aware compute weighting could support more transparent cost attribution for modules with heavier computational paths.

$R_i = \alpha_i \cdot C_i$

Compute allocation is scaled by the attention-informed importance of each module.
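A minimal sketch of the allocation rule `R_i = alpha_i * C_i` follows. It assumes `C_i` is a per-module baseline compute budget, which the source does not define precisely. The module names and numbers are hypothetical.

```python
def allocate_compute(alpha, base_compute):
    """Compute R_i = alpha_i * C_i for every module i."""
    return {module: alpha[module] * base_compute[module] for module in alpha}

# Hypothetical attention weights and baseline budgets (arbitrary units).
alpha = {"mod_a": 0.25, "mod_b": 0.75}
base_compute = {"mod_a": 8.0, "mod_b": 4.0}

allocation = allocate_compute(alpha, base_compute)
```

Under these assumed inputs, `mod_b` receives the larger allocation despite its smaller baseline, because its attention weight is three times that of `mod_a`.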

$D_k = \sum_i \mathbb{I}(M_k \in T_i) \cdot \alpha_{ik}$

The paper uses this formalism to discuss fault-prone central dependencies in ERP workflows.
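The dependency score `D_k` can be computed directly from per-sequence attention weights. The sketch below assumes the sum runs over sequences `T_i` and that `alpha_ik` is the weight assigned to module `k` in sequence `i`. The sequences and weights are hypothetical.

```python
from collections import defaultdict

def dependency_scores(sequences, attention):
    """Compute D_k = sum_i I(M_k in T_i) * alpha_ik.

    sequences[i] lists the module names in T_i;
    attention[i] maps module name -> alpha_ik for that sequence.
    """
    scores = defaultdict(float)
    for seq, weights in zip(sequences, attention):
        # The indicator counts each module at most once per sequence.
        for module in set(seq):
            scores[module] += weights.get(module, 0.0)
    return dict(scores)

# Hypothetical sequences and attention weights.
sequences = [["A", "B"], ["B", "C"]]
attention = [{"A": 0.25, "B": 0.75}, {"B": 0.5, "C": 0.5}]

scores = dependency_scores(sequences, attention)
hub = max(scores, key=scores.get)
```

A module that appears in many sequences with high weight accumulates a large score, which is the sense in which it acts as a central dependency.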

$f_\theta: T \rightarrow c \rightarrow \hat{y}$

The BiLSTM-Attention engine is framed as a stateless service that can interface with modular ERP services.

The model is experimentally validated for prediction quality. The operational cloud use cases remain a paper-backed integration pathway, not a claimed deployed production system.

The accepted manuscript package for this ERP research portal follows the same public-access pattern as the follow-up site: profile confirmation, video/channel confirmation, author email request, and encrypted archive delivery.

Gannon University, International University of Business Agriculture and Technology, and Lamar University

The paper combines AI and cloud computing to improve ERP adaptability, predictive insight, and scalability. A BiLSTM-Attention model is used to learn ERP module relationships and support modular analysis in cloud-hosted enterprise systems.

The reported results reach 91.2% accuracy, 89.5% precision, 90.8% recall, and 90.1% F1-score, with Module_5 receiving the highest attention score at 0.17.
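As a quick consistency check, the reported F1-score can be recomputed as the harmonic mean of the reported precision and recall. This assumes the paper uses the standard single-figure definition rather than a per-class average.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)

# Precision and recall as reported above.
f1 = f1_score(0.895, 0.908)
print(f"{f1:.3f}")  # prints 0.901
```

The result rounds to 90.1%, matching the reported F1-score.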

The accepted manuscript section is now protected with encrypted archive downloads, while the public portal continues to expose the figures, metrics, HTML report, poster, and implementation notes.

PDF and LaTeX source packages are available through the secure gate below, matching the follow-up manuscript-access workflow.

The repository also links to the author's public video overview, giving visitors a second way to understand the motivation, architecture, and outcomes of the work.

The site already exposes the paper, source files, and charted results. The embedded overview adds a communication layer for readers who want a faster narrative path through the same published work.

The project has been reorganized so the paper, source files, chart assets, and supporting pages are easier to discover and reuse.

The public website now exposes the accepted manuscript through encrypted archives only, matching the protected access flow used in the follow-up project.

Exact metrics, attention scores, and reconstructed training curves are published as CSV and JSON for traceable reuse.

Beyond the main portal, the site now includes a narrative HTML report, a deployment-aware implementation guide, and a poster-style summary page.

This site now highlights the lead author and collects all public profiles in one section for verification, citation, collaboration, and manuscript-password requests.

Professional profile and engineering background.
linkedin.com/in/md-anisur-rahman-chowdhury-15862420a

Public repositories, research artifacts, and ongoing engineering work.
github.com/ANIS151993

Publication and citation profile.
scholar.google.com/citations?user=NQyywPoAAAAJ

Personal site and portfolio showcase.
marcbd.site

Academic network profile for research visibility and collaboration.
researchgate.net/profile/Md-Anisur-Rahman-Chowdhury

Password requests and research inquiries can be sent directly by email.
engr.aanis@gmail.com

Citation files are available in both BibTeX and GitHub-native formats, and the repository now makes the key publication paths obvious.

@inproceedings{chowdhury2025erpcloudai,
  author = {Md Anisur Rahman Chowdhury and Khandakar Rabbi Ahmed and Kefei Wang and Sabrina Mohona and Shahriar Alam Robin and Shah Tawkir Nesar},
  title = {AI and Cloud Computing in Business Systems: A Hybrid Model for Enhancing Enterprise Resource Planning},
  booktitle = {2025 9th International Conference on Computational System and Information Technology for Sustainable Solutions (CSITSS)},
  pages = {1--6},
  year = {2025},
  url = {https://ieeexplore.ieee.org/abstract/document/11295090},
  note = {IEEE Xplore document 11295090}
}

Repository citation metadata is also published via `CITATION.cff`.